How to Set up a Product Development Environment for Microservices

TL;DR

Option #1: Spin up the full system locally

Option #2: Spin up the full system in cloud

Option #3: Spin up all business logic locally, route cloud services to laptop

Option #4: make local code for single service available in remote cluster

Telepresence

Questions?

How do you set up a product development environment for microservices and Kubernetes? While the tooling and infrastructure for building traditional web applications has been highly optimized over time, the same cannot be said for microservices.

In particular, setting up a product development environment for microservices can be considerably more complex than a traditional web application:

- Your service likely relies on resources like a database or a queue. In production these will often be provided by your cloud provider, e.g. AWS RDS for databases or Google Pub/Sub for publish/subscribe messaging.

- Your service might rely on other services that either you or your coworkers maintain. For example, your service might rely on an authentication service.

These external dependencies add complexity to creating and maintaining a development environment. How do you set up your service so you can code and test it productively when your service depends on other resources and services?

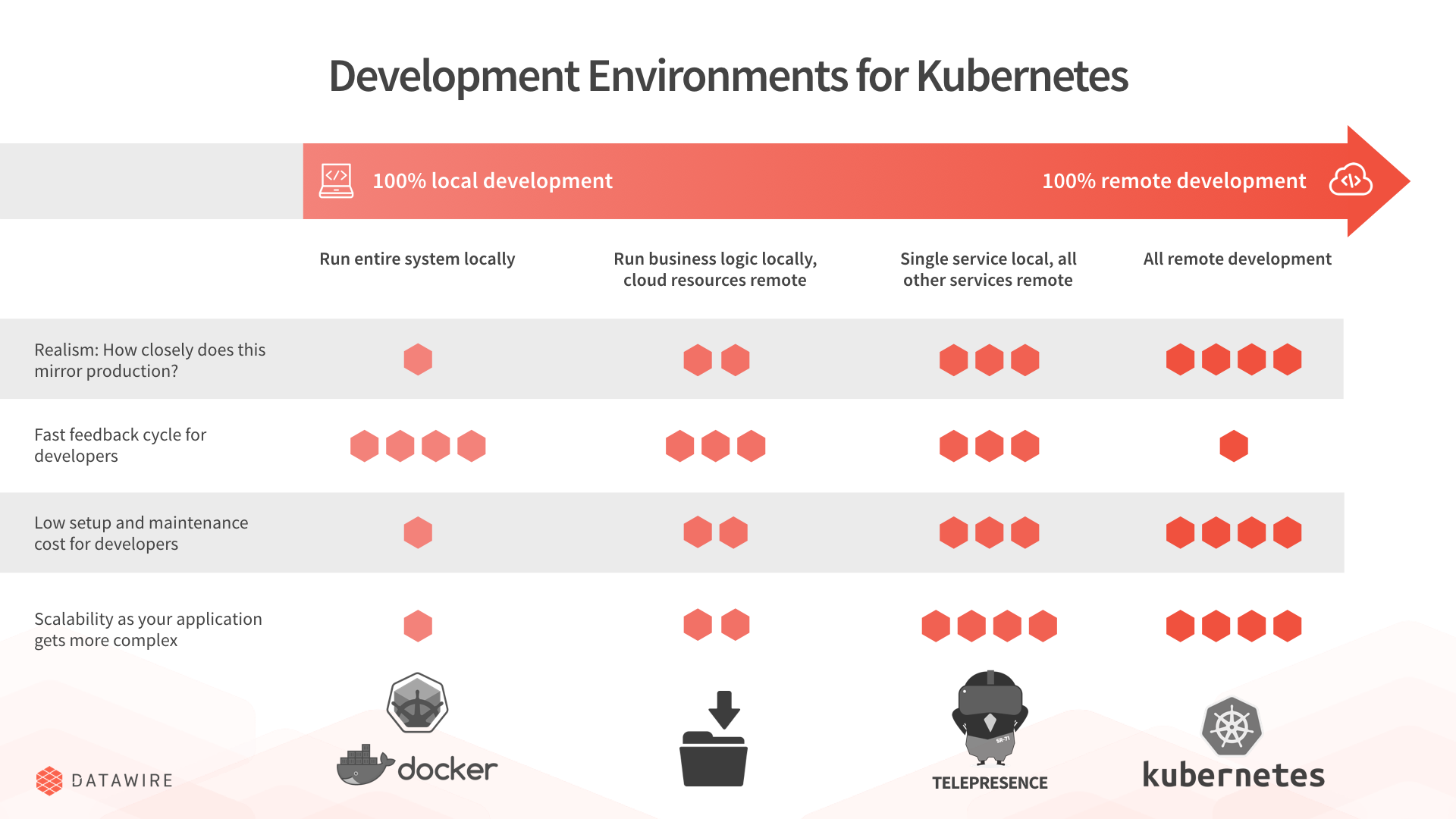

This article discusses the various approaches to setting up a development environment for Kubernetes, and the tradeoffs.

TL;DR

Option #1: Spin up the full system locally

Using tools like minikube or Docker Compose you can spin up multiple services locally. For resources like databases you use Docker images that match them:

- For a database like PostgreSQL you can use the Docker image as a replacement for AWS RDS. It won't quite match, but much of the time it will be close enough.

postgres - For other cloud resources you can often spin up emulators, e.g. Google provides an emulator for Google Pub/Sub that you can run locally.

LaptopSource codeYour serviceDependency service 1Dependency service 2postgres Docker imageYet another serviceEven more serviceQueue emulator

If you’re unable to use containers, Daniel Bryant outlines a number of other approaches for developing microservices locally with various tradeoffs.

Pros:

- Fast, local development.

Cons:

- Some resources can be hard to emulate locally (e.g., Amazon Kinesis).

- You need a way to set up and run all your services locally, and keep this setup in sync with how you run services in production.

- Not completely realistic. Depending on the option chosen, there can be major or minor differences from production, e.g., the Docker image doesn’t quite match the AWS RDS PostgreSQL configuration, mocks/stubs are not a substitute for running a full-blown service.

postgres - You potentially need to spin up the whole system locally, which will become difficult once you have a large enough number of services and resources. With minikube in particular, this threshold is fairly low.

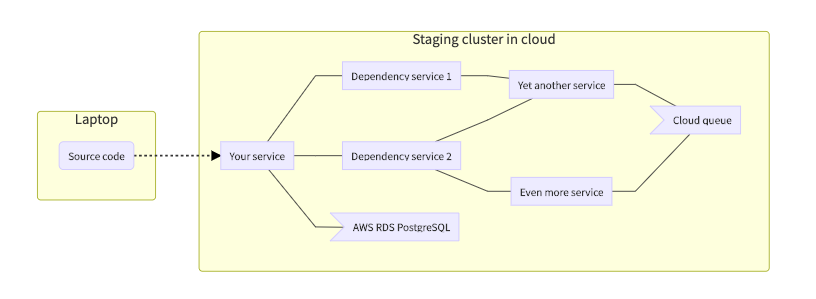

Option #2: Spin up the full system in cloud

You spin up a realistic cluster in the cloud, a staging/testing environment. It might not have as much nodes as the production system, since load will be much lower, but it is quite close. Your code also runs in this cluster.

Staging cluster in cloudLaptopSource codeYour serviceDependency service 1Dependency service 2AWS RDS PostgreSQLYet another serviceEven more serviceCloud queue

Pros:

- Very realistic.

Cons:

- Development is slower since you need to push code to cloud after every change in order to try it out.

- Using tools like debuggers is more difficult since the code all runs remotely.

- Getting log data for development is considerably more complex.

- Need to pay for additional cloud resources, and spinning up new clusters and resources is slow.

- As a result, you may end up using a shared staging environment rather than one per-developer, which reduces isolation.

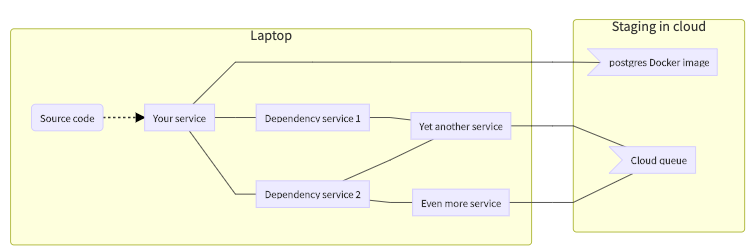

Option #3: Spin up all business logic locally, route cloud services to laptop

As with option #1, all services run locally. However, cloud resources (AWS RDS etc.) are made available locally on your development machine via some sort of tunneling or proxying, e.g. a VPN.

Pros:

- Fast, local development.

- Very realistic.

Cons:

- You need a way to set up and run all your services locally, and keep this setup in sync with how you run services in production.

- Need to pay for additional cloud resources, and spinning up new cloud resources is slow.

- As a result, you may end up using a shared staging environment rather than one per-developer, which reduces developer isolation.

- You potentially need to spin up the whole system locally, which will become difficult once you have a large enough number of services.

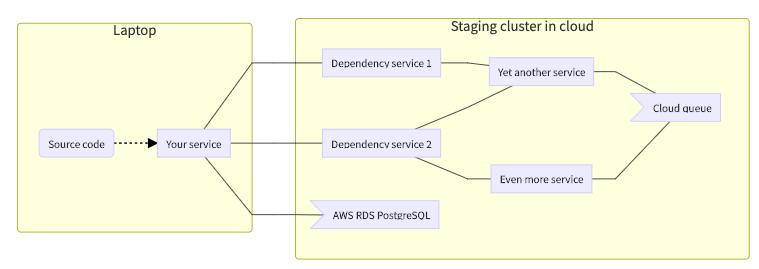

Option #4: make local code for single service available in remote cluster

You spin up a realistic cluster in the cloud, a staging/testing environment. But your service runs locally, and is proxied/VPNed into the remote cluster.

Pros:

- Fast, local development.

- Very realistic.

- Simple local setup.

Cons:

- Need to pay for additional cloud resources, and spinning up new cloud resources is slow.

- As a result, you may end up using a shared staging environment rather than one per-developer, which reduces developer isolation. This is mitigated by the fact that your changes (and that of others) is local to your environment.

- Configuring a proxy or VPN that works with your cluster can be complex.

Telepresence

At Datawire, we’ve elected to go with option #4. We develop services locally on our laptop, while shared services run in a cloud-hosted Kubernetes instance (we also use Amazon resources such as RDS).

We found that writing a simple, easy-to-use proxy to be fairly complex, so we open sourced the code so you can do the same in a few minutes. If you’re interested, visit our Telepresence page to learn more.

Questions?

We’re happy to help! Learn more about microservices, start using our open source Edge Stack API Gateway, or contact our sales team.